Automatic speech recognition (ASR) technologies have advanced significantly, yet notable disparities remain in their ability to accurately recognize different languages. Prominent ASR systems, such as OpenAI's Whisper, show pronounced performance gaps on Eastern languages compared with their Western counterparts. This discrepancy poses concrete challenges in multilingual regions, especially those with many dialects and linguistic variations, and underscores the need for sophisticated multilingual ASR systems tailored specifically to Eastern languages.

Researchers from DataOcean AI and Tsinghua University have introduced Dolphin, a comprehensive multilingual automatic speech recognition model. It is built on an extended Whisper architecture and optimized to cover a wider range of Eastern languages and dialects. Dolphin addresses key limitations of current multilingual ASR models by combining proprietary and publicly available datasets. The model supports 40 Eastern languages from East Asia, South Asia, Southeast Asia, and the Middle East, as well as 22 Chinese dialects.

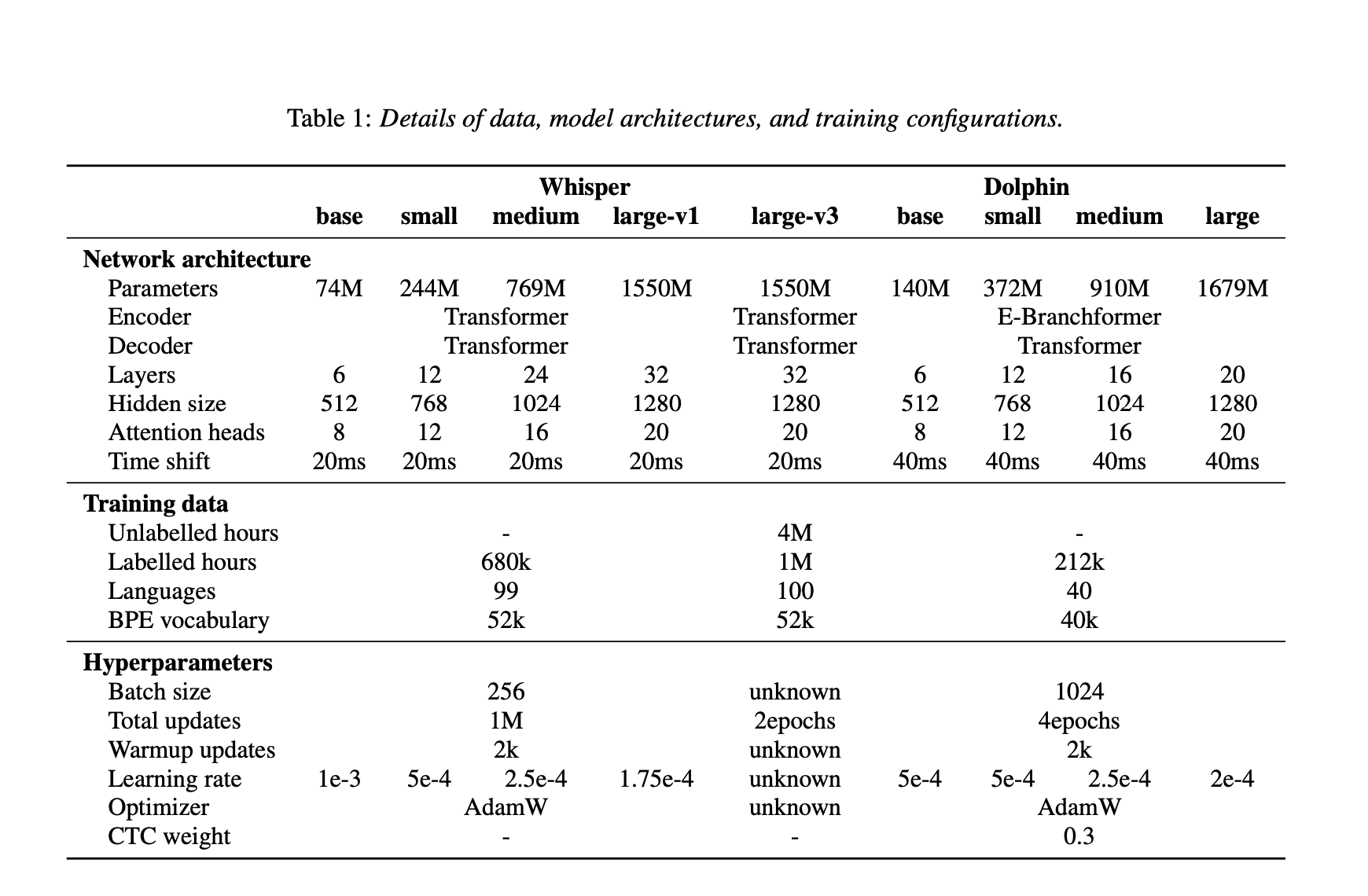

Dolphin uses a hybrid ASR approach that combines Connectionist Temporal Classification (CTC) with attention-based mechanisms. Its architecture pairs an E-Branchformer encoder with a Transformer decoder, which improves the model's ability to interpret complex linguistic patterns across languages. Dolphin also uses a two-level language token system that separates general language codes from region-specific dialect tokens. This mechanism improves recognition accuracy and resolution, especially for dialect-rich languages such as Chinese. In addition, Dolphin incorporates a 4× subsampling layer that shortens input sequences, improving computation speed and training efficiency without compromising recognition accuracy.
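To make the three mechanisms in the paragraph above concrete, here is a minimal Python sketch under stated assumptions; it is not Dolphin's released code. The token strings produced by the hypothetical `build_prefix` helper, the pair of kernel-3, stride-2 convolutions assumed for the 4× subsampling, and the CTC weight of 0.3 in `hybrid_loss` are all illustrative choices rather than values taken from the paper. The 3000-frame input corresponds to a 30-second clip at a 10 ms frame hop, which is also an assumption.

```python
from typing import List


def build_prefix(language: str, region: str) -> List[str]:
    """Two-level prefix: a general language token, then a region/dialect token.

    The exact token spellings are hypothetical; the article only says the two
    levels are kept separate.
    """
    return [f"<{language}>", f"<{region}>"]


def conv_out_len(n: int, kernel: int = 3, stride: int = 2) -> int:
    """Output length of a 1-D convolution with no padding."""
    return (n - kernel) // stride + 1


def subsampled_len(num_frames: int) -> int:
    """Assumed 4x subsampling: two stacked kernel-3, stride-2 convolutions."""
    return conv_out_len(conv_out_len(num_frames))


def hybrid_loss(ctc_loss: float, att_loss: float, ctc_weight: float = 0.3) -> float:
    """Interpolate CTC and attention losses (ctc_weight=0.3 is an assumed value)."""
    if not 0.0 <= ctc_weight <= 1.0:
        raise ValueError("ctc_weight must lie in [0, 1]")
    return ctc_weight * ctc_loss + (1.0 - ctc_weight) * att_loss


if __name__ == "__main__":
    # Hypothetical prefix for a Chinese dialect utterance.
    print(build_prefix("zh", "sichuan"))
    # 3000 input frames -> 1499 after the first conv -> 749 after the second.
    print(subsampled_len(3000))
    # Weighted sum of an illustrative CTC loss and attention loss.
    print(hybrid_loss(2.0, 1.0))
```

Under these assumptions a 3000-frame input shrinks to 749 encoder frames, roughly a quarter. Because self-attention cost grows quadratically with sequence length, shortening the sequence this way is where the speed and training-efficiency gains come from.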

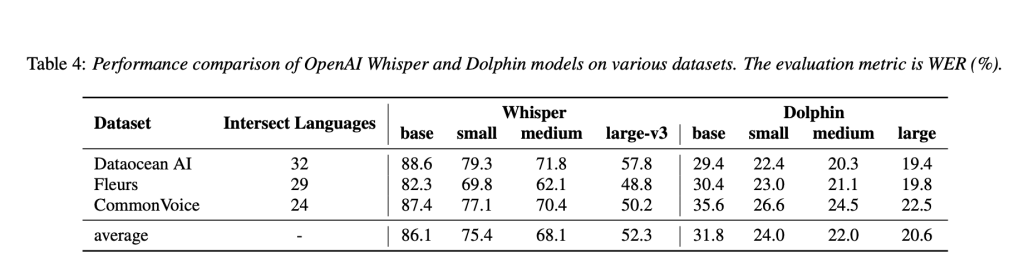

Experimental evaluations show that Dolphin substantially improves multilingual speech recognition accuracy relative to Whisper models. For example, the Dolphin small model reduced word error rate (WER) by approximately 24.5% compared to the base model, with further incremental improvements observed in the medium and large variants. Specifically, the Dolphin base model achieved an average WER of 31.8%, notably better than Whisper's large-v3 model, which recorded an average WER of 52.3% on the same evaluation benchmarks. Evaluations on dialect-focused datasets, including KeSpeech, confirmed that Dolphin handles intricate linguistic variations consistently, with performance gains that grow with model size.
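WER is conventionally defined as the minimum number of word substitutions, deletions, and insertions needed to turn a hypothesis into its reference, divided by the number of reference words. The sketch below, whose helper names are hypothetical, implements that definition with a word-level edit distance and adds a relative-reduction helper for comparing two WER values. It splits on whitespace, so it assumes space-delimited text; unsegmented scripts such as Chinese or Japanese would need word segmentation or character-level scoring instead.

```python
from typing import List


def word_errors(reference: List[str], hypothesis: List[str]) -> int:
    """Minimum substitutions + deletions + insertions (word-level Levenshtein)."""
    prev = list(range(len(hypothesis) + 1))
    for i, ref_word in enumerate(reference, start=1):
        curr = [i] + [0] * len(hypothesis)
        for j, hyp_word in enumerate(hypothesis, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of a hypothesis word
                prev[j - 1] + cost,  # substitution, or match when cost == 0
            )
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate for whitespace-delimited text."""
    ref = reference.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    return word_errors(ref, hypothesis.split()) / len(ref)


def relative_reduction(baseline: float, improved: float) -> float:
    """Fraction by which `improved` is lower than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) / baseline


if __name__ == "__main__":
    # One substitution (sat -> sit) and one deletion ("the"): 2 errors / 6 words.
    print(wer("the cat sat on the mat", "the cat sit on mat"))
    # Average WERs reported in the article: Whisper large-v3 vs. Dolphin base.
    print(round(relative_reduction(52.3, 31.8), 3))
```

Applied to the averages quoted in the article, `relative_reduction(52.3, 31.8)` gives about 0.39, meaning Dolphin base's average WER is roughly 39% lower than Whisper large-v3's on those benchmarks. This is simple arithmetic on the reported numbers, not a figure stated in the paper.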

The research team publicly released the Dolphin base and small models under the Apache 2.0 license, together with the associated inference code. Dolphin was trained on a dataset of 21.2 million hours of audio, including 7.4 million hours drawn from open datasets such as Common Voice, ReazonSpeech, and GigaSpeech 2, which supports robustness and reproducibility.

In summary, Dolphin is a significant advance in multilingual ASR technology. It systematically addresses prevailing limitations in Eastern language and dialect recognition through methodical data integration, a refined architectural framework, and a commitment to open-source release. This work sets an influential benchmark for future multilingual ASR research and promotes linguistic inclusivity and system generalization.

Check out the paper, the Dolphin-small model, and the Dolphin-base model. All credit for this research goes to the researchers of this project. You are also welcome to follow us on Twitter, and don't forget to join our 85k+ ML SubReddit.


Asif Razzaq is the CEO of Marktechpost Media Inc. His most recent endeavor is the launch of an artificial intelligence media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform draws over 2 million monthly views, illustrating its popularity among audiences.